Understanding Where LLMs Go Wrong and Why It Matters

Where LLMs Go Wrong: Experiences from Building Automated Due Diligence Tools

At Threat Digital, we use large language models (LLMs) to process hundreds of thousands of documents every day in support of automated due diligence and compliance workflows. We’ve seen firsthand how generative AI (GenAI) can speed up research, identify risks, and surface useful insights.

We’ve also seen how it can fail.

This post outlines the three most common ways LLMs can fall short, based on real-world experience building and testing AI.

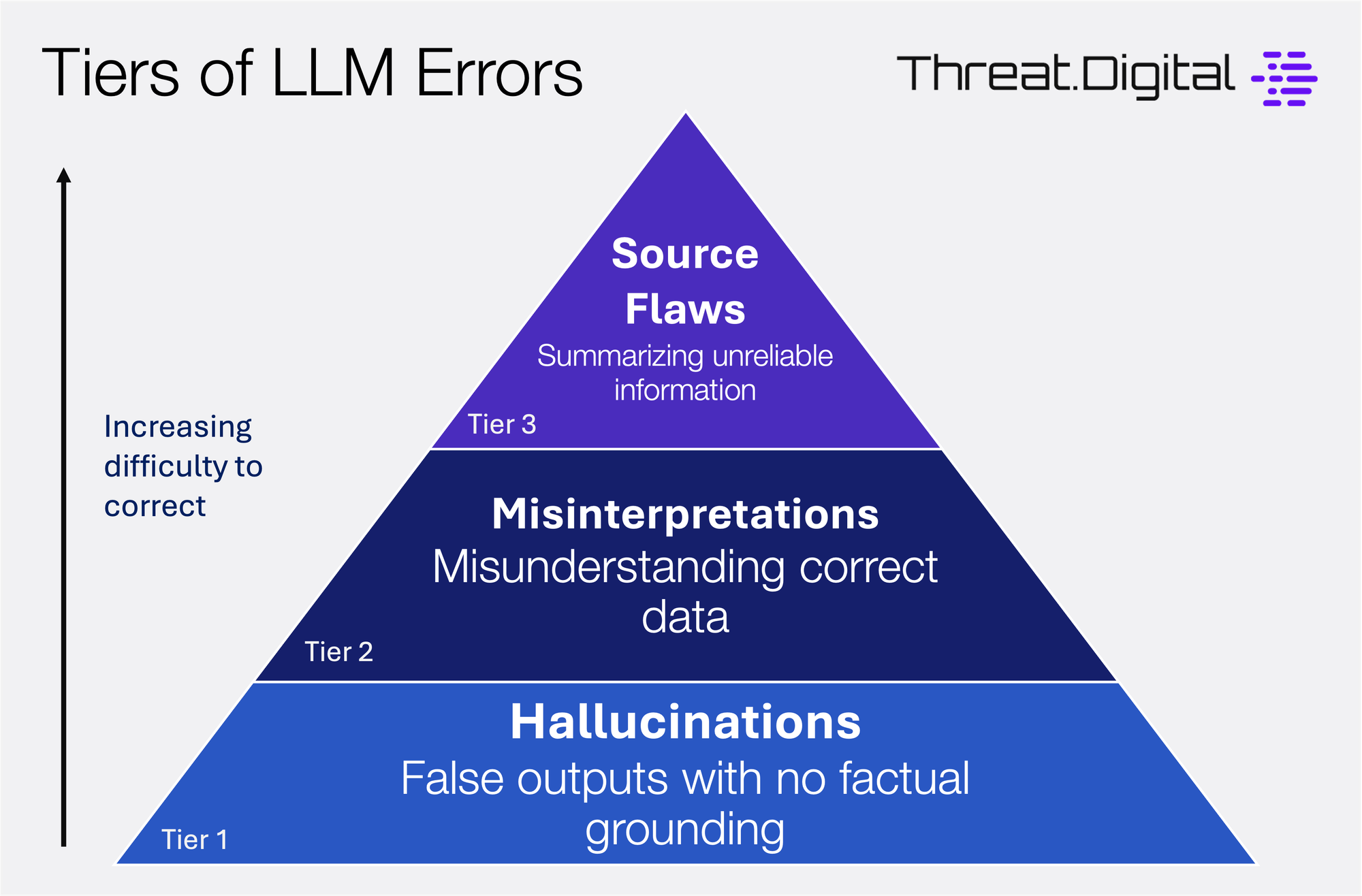

Hallucinations: When the AI Model Makes Things Up

The most common LLM error that most people are now aware of is hallucinations. A hallucination occurs when a model generates text that sounds accurate but is entirely false. These kinds of errors happen when the model doesn't have the information it needs and still attempts to fill in the gaps.

Examples include:

- Citing non-existent news articles or URLs

- Inventing the name of a regulator or enforcement agency

- Summarizing an imaginary enforcement action

These responses are often written in a confident tone, which makes them hard to spot unless you’re paying close attention. LLMs are not meant to be fact machines, and shouldn't be treated as such.

Misinterpreting Real Information

The leading technical approach to reducing hallucinations (and one we utilize in our solutions) is to 'ground' the AI model with external information. This process involves searching for data relevant to the query, and then feeding that data to the model so it can generate a more accurate response. If you’ve ever used an LLM that includes links to actual web sources, that’s exactly what’s happening.

Grounding significantly reduces hallucinations, but it isn’t foolproof. In some cases, the model has access to correct information but still produces inaccurate results. This usually happens when it misinterprets the source or misses important context.

For instance, it might:

- Pull a quote out of context, shifting its meaning entirely

- Confuse individuals in a news article with similar names, such as a father and son, or siblings with matching initials

- Misidentify the subject of a report, such as swapping the victim and perpetrator in a summary

The tricky part is that the output cites a correct source. That’s why human review still matters. Users should always verify key claims by checking the linked sources, especially when using LLMs for due diligence or compliance research.

Accurate Analysis of Incorrect Information

The final situation is the most challenging to solve, especially from a technical perspective. In some cases, the AI system does everything correctly, it finds the relevant source and summarizes the information accurately, but the source itself is flawed.

This often happens when dealing with open web content. Some common issues include:

- Outdated content that no longer reflects the most updated facts

- News written with clear bias or a particular agenda

- Misinformation or unverified claims that appear on low-quality sites

In these cases, the model may be technically accurate, but the result is still misleading. Since LLMs don’t evaluate credibility the way a human can, it’s important for users to verify both the content and the quality of the source before relying on the output.

Final Thoughts

LLMs and generative AI are powerful tools for automating due diligence and compliance research, but they aren’t perfect. Their outputs must be treated with care.

If you're using LLMs in high-stakes environments, the key is to:

- Ground responses in traceable data

- Check for misread context, not just factual accuracy

- Build processes that include human oversight and source validation

Understanding how LLMs fail is just as important as knowing what they can do well.